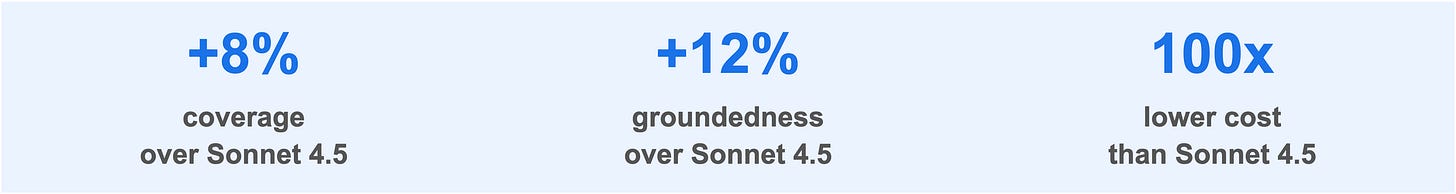

Case Study: Aurasell builds an 8B web information extraction model that outperforms Sonnet 4.5 by 8%

With Oumi’s technology, Aurasell's custom AI beat Anthropic's Sonnet 4.5 at extracting information from webpages by 8% in coverage and 12% in groundedness

By Stefan Webb

Problem

Aurasell is an AI-first CRM platform whose core offering includes a research agent that builds custom value pyramids for any target customer. The agent surfaces corporate objectives, strategic initiatives, and business challenges by running a fixed set of web search queries, then passes the results to an LLM that extracts and organizes the relevant details into a structured output.

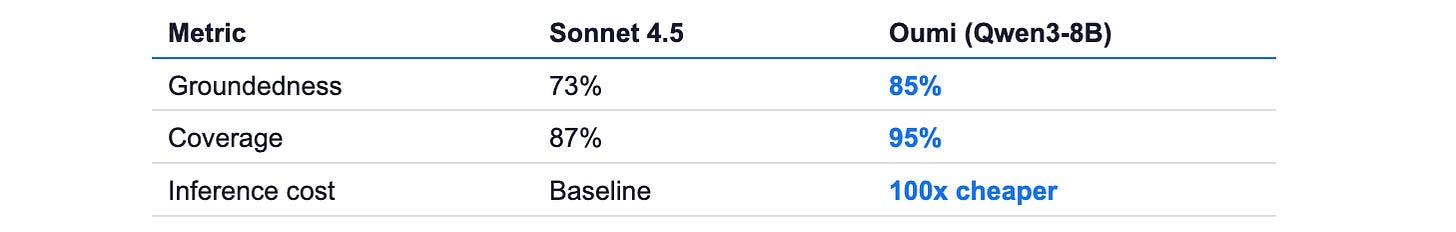

Two metrics define success for this task: groundedness, meaning every claim in the output must be supported by the source material, and coverage, meaning the model should capture all relevant information present in the search results. Aurasell's existing system relied on Sonnet 4.5, which achieved acceptable quality but was difficult to scale. As Aurasell’s customer base grew, the cost and latency of running a large frontier model on every research request became unsustainable.
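To make the two metrics concrete, here is a minimal Python sketch that treats each one as a simple ratio. The case study does not say how the scores are actually computed, so the ratio form, the `JudgedSummary` type, and all of its field names are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class JudgedSummary:
    """Hypothetical per-summary judge counts; field names are illustrative."""
    claims_total: int      # claims the summary makes
    claims_supported: int  # claims found to be backed by the source material
    facts_relevant: int    # relevant facts present in the search results
    facts_captured: int    # relevant facts that the summary includes


def groundedness(j: JudgedSummary) -> float:
    """Share of claims supported by the sources (1.0 when there are no claims)."""
    if j.claims_total == 0:
        return 1.0
    return j.claims_supported / j.claims_total


def coverage(j: JudgedSummary) -> float:
    """Share of relevant source facts captured (1.0 when there are none)."""
    if j.facts_relevant == 0:
        return 1.0
    return j.facts_captured / j.facts_relevant


# Made-up counts, not results from the case study.
example = JudgedSummary(claims_total=10, claims_supported=9,
                        facts_relevant=8, facts_captured=6)
print(groundedness(example), coverage(example))
```

Under this framing the two metrics pull in different directions: a summary can score perfectly on groundedness by saying very little, which is why coverage is tracked alongside it.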

Solution

Oumi partnered with Aurasell to build a custom fine-tuned model optimized specifically for their information extraction task. Since Aurasell was unable to share example outputs or their full system prompt, the team took a ground-up approach to data generation and evaluation.

Using the list of search queries provided by Aurasell, Oumi generated web search results for approximately 3,000 companies. A large frontier model was then used to produce 2,000 high-quality output summaries as training data. To measure performance, two custom LLM judges were created: a groundedness judge to detect hallucinations and unsupported claims, and a coverage judge to penalize summaries that missed key information.
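As a rough illustration of what an LLM judge of this kind might look like, the Python sketch below builds a groundedness prompt and parses a one-word verdict. The prompt wording, the `judge_groundedness` function, and the `call_llm` callable are all hypothetical; the case study does not describe Oumi's actual judge prompts or scoring format, and the model call is stubbed out so the example runs on its own.

```python
from typing import Callable

# Hypothetical prompt template; not the wording used in the case study.
GROUNDEDNESS_PROMPT = (
    "You are checking a summary against its sources.\n"
    "Sources:\n{sources}\n\n"
    "Summary:\n{summary}\n\n"
    "Answer with exactly one word: SUPPORTED or UNSUPPORTED."
)

VALID_VERDICTS = {"SUPPORTED", "UNSUPPORTED"}


def judge_groundedness(sources: str, summary: str,
                       call_llm: Callable[[str], str]) -> bool:
    """Return True if the judge model says the summary is supported.

    `call_llm` is any function that takes a prompt and returns the
    model's text reply; it stands in for a real model client.
    """
    prompt = GROUNDEDNESS_PROMPT.format(sources=sources, summary=summary)
    verdict = call_llm(prompt).strip().upper()
    if verdict not in VALID_VERDICTS:
        # Fail loudly rather than silently counting a malformed reply.
        raise ValueError(f"unexpected judge reply: {verdict!r}")
    return verdict == "SUPPORTED"


def fake_llm(prompt: str) -> str:
    """Stub standing in for a real judge model."""
    return "supported\n"


print(judge_groundedness("Acme plans to expand in 2025.",
                         "Acme is planning an expansion.",
                         fake_llm))
```

A coverage judge could follow the same shape with a different prompt, for example asking which relevant facts from the sources are missing from the summary.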

Iteration on both the evaluation framework and the training approach proved critical. Early judges measuring conciseness and clarity inadvertently rewarded shorter, less useful summaries. Replacing these with the coverage metric corrected that bias.

Outcome

The final 8B fine-tuned model, built on Qwen3 8B, outperformed Sonnet 4.5 on both key metrics (see table above). The custom model also approached Opus-level groundedness while surpassing it in coverage, all at a fraction of the cost and latency.

The bottom line: By moving to an 8B model, Aurasell can now run their research agent at scale, delivering faster results to sellers without compromising on quality.

What’s next

With Oumi’s technology, Aurasell was able to build a small custom AI model that solved a pressing business need. Theirs, however, is just one use case. Many other enterprises are discovering the benefits of custom AI models and the ease and economy of model development that the Oumi Platform makes possible.

Why not try it out today and see for yourself? You only need to come with your prompt and the Oumi Agent will take it from there!