Oumi can now host your Custom AI Models with a single click (and other news)

Updates for Week of May 11, 2026

By Stefan Webb

🚀 It has been just over a month since we launched the Oumi Platform, and we’ve been thrilled to see what you are building with it. Over 2,000 users from diverse industries have signed up and started solving real, pressing business problems (more about that in the case study below).

But we haven’t rested on our laurels, of course. In our first newsletter, Specialized Intelligence, we’d like to tell you about exciting new developments on the Oumi Platform.

Specialized Intelligence

First, what exactly is Specialized Intelligence and why should you care? Specialized Intelligence is the exact opposite of general purpose intelligence. Examples of general purpose intelligence include frontier LLMs like GPT-5.4, Opus 4.7, and DeepSeek V4.

These models are satisfactory at a wide range of tasks without excelling in any particular one. They are good for developing prototypes, but builders typically hit a roadblock when moving beyond them: API costs balloon, rate limits are hit, latencies are long, and the final metrics fall short of what production applications need. These are problems that cannot be solved by clever context engineering.

“Custom AI that enterprises fully own and control is not just an advantage, it is the only way for them to stay relevant. Enterprises are maturing in their use of GenAI and quickly realizing it. They are acting on it. The wave from rented general purpose intelligence to fully owned specialized intelligence is quickly growing, even when current tools are lacking.” — Manos Koukoumidis, CEO @ Oumi

Specialized Intelligence, on the other hand, typically involves smaller LLMs, like Qwen 3.6, Gemma 4, and Phi 4 Mini. These models excel at specific tasks, exceeding the performance (accuracy, groundedness, etc.) of models like GPT-5.4 that are one hundred times as large!

Because they are two orders of magnitude smaller, inference costs can be two orders of magnitude lower. Because they can be run on-edge, on-premise, or self-hosted, rate limits are avoided. Likewise, inference latency can be cut by half or more. We demonstrate small specialized models outperforming frontier LLMs on our blog.

Read our Vision Statement on “The Case for Specialized Intelligence”.

What’s new on the Oumi Platform

Managed Inference: Deploy your custom trained models on the Oumi Platform

You can host your fine-tuned models directly from the Oumi Platform (in Beta) and start running inference immediately.

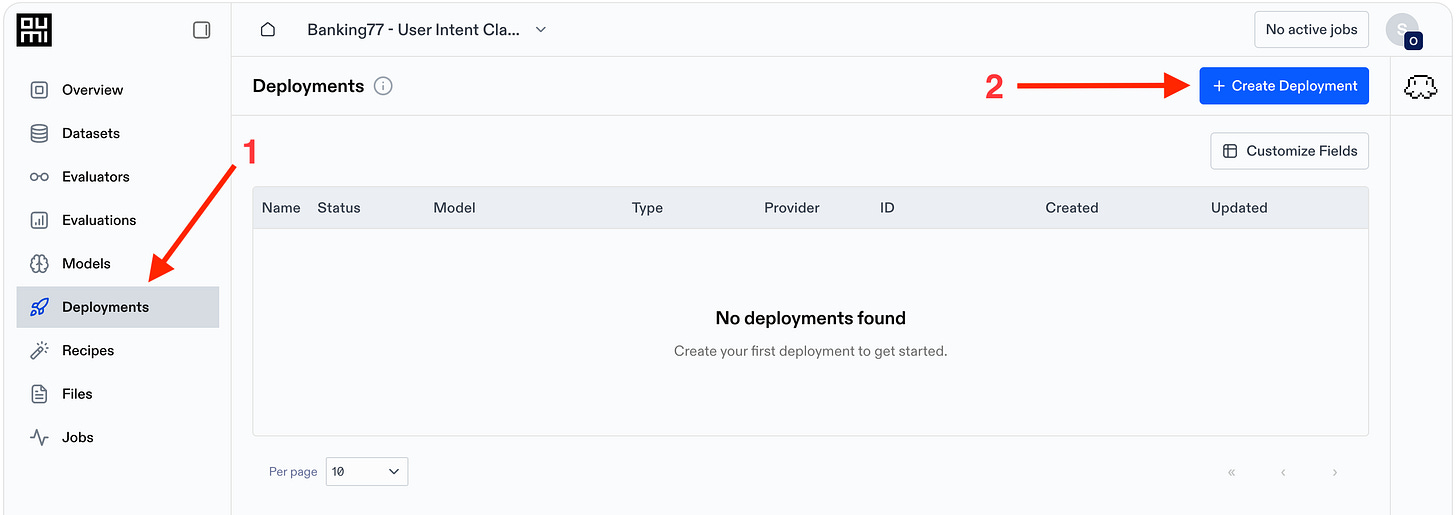

Click on the new Deployments tab, select “Create Deployment”, and follow the dialogs.

We host an OpenAI-compatible endpoint for you, accessible with an Oumi API key.
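Because the endpoint follows the OpenAI API shape, a request to it might look like the minimal sketch below. The base URL and model name are placeholders we made up for illustration, not real Oumi values; use the ones shown for your own deployment. The sketch assumes the key is sent as a standard bearer token, as OpenAI-style endpoints usually expect.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute the endpoint URL and model name
# shown for your own deployment.
BASE_URL = "https://example.invalid/v1"
MODEL_NAME = "my-fine-tuned-model"


def build_chat_request(api_key: str, user_message: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request without sending it."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # Assumption: the Oumi API key is passed as a bearer token.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first completion's text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `send(build_chat_request(api_key, "Classify this ticket: ..."))` would then return the model's reply, assuming the deployment responds in the standard chat completions format.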

New open source models available

Qwen 3.6/3.5 27B with LoRA fine-tuning

Gemma 4 2B, 4B with full-weight fine-tuning

Don’t see the model you need? Let us know at contact@oumi.ai

New data quality tests

Oumi now flags conversations with no user message and conversations where a system message appears anywhere other than the start.

These checks make it easier to identify noisy or invalid examples before using a dataset for evaluation, synthesis, or training.
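To make the two checks concrete, here is a rough sketch of how similar validation could be written by hand. It is not the Oumi implementation. It assumes each conversation is a list of messages with a `role` field, and the function and issue names are hypothetical.

```python
def check_conversation(messages: list[dict]) -> list[str]:
    """Return a list of issue labels for one conversation (empty if clean)."""
    issues = []
    roles = [message.get("role") for message in messages]

    # Check 1: the conversation contains no user message at all.
    if "user" not in roles:
        issues.append("no_user_message")

    # Check 2: a system message appears anywhere other than the first position.
    if any(role == "system" for role in roles[1:]):
        issues.append("system_message_not_at_start")

    return issues


def flag_dataset(conversations: list[list[dict]]) -> dict[int, list[str]]:
    """Map the index of each problematic conversation to its issues."""
    flagged = {}
    for index, messages in enumerate(conversations):
        issues = check_conversation(messages)
        if issues:
            flagged[index] = issues
    return flagged
```

Running `flag_dataset` over a dataset before training gives a quick list of conversations to inspect or drop.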

Specialized Model of the Week

Triaging customer support tickets with a sub-1B Small Language Model that Beats GPT-5.4

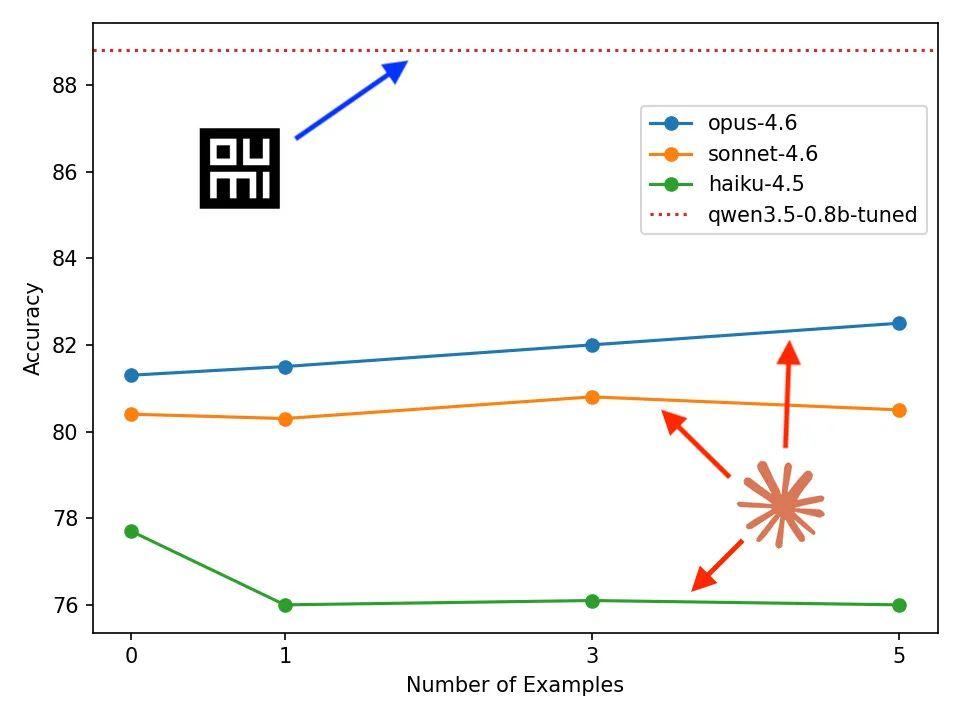

In a recent blog post, “Building Customer Support with a sub-1B Small Language Model that Beats GPT-5.4”, we show how to use the Oumi Platform to build a custom 0.8B-parameter model for triaging customer support tickets that outperforms frontier models like GPT-5.4 and Opus 4.6. On accuracy, it outperforms Opus 4.6 by 6.3% (see figure below) and GPT-5.4 by 8.4%. What’s more, it runs on a laptop (or even an iPhone), takes one hour and a few dollars to train, plus ten minutes of human time, and we did it entirely by prompting the Oumi Agent: “look ma, no code!” Read more via the link.

Customer Story

DMG achieves 6% higher quality and 100x lower costs for invoice validation

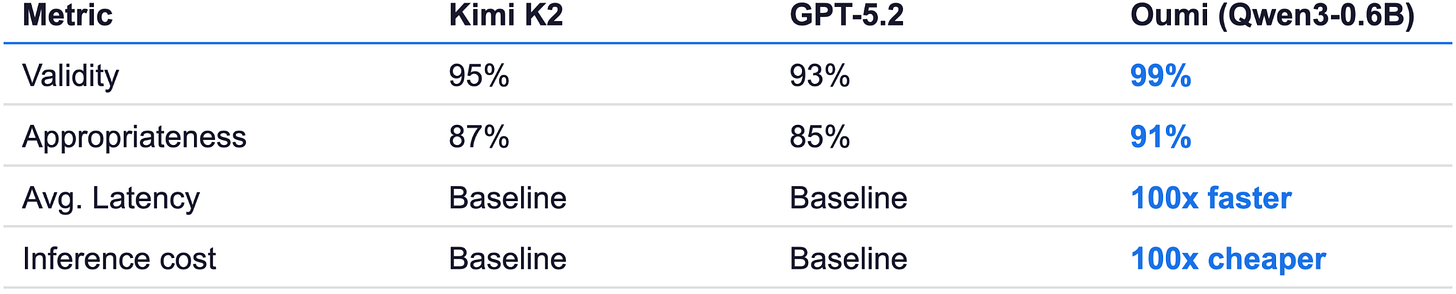

In another recent post, we analyzed how DMG built specialized SLMs with Oumi to solve their business needs. DMG is an intermediary between contractors (carpenters, plumbers, electricians, etc.) and project managers. They have to validate invoices from these contractors, both to prevent fraud and to ensure the invoices are completed correctly.

DMG built a 0.6B-parameter model with Oumi for validating invoices that outperformed frontier models like GPT-5.2 and Kimi K2 (see table below). Read more via the link.

What have we been up to?

The last few weeks have been quite busy for Oumi. We have sponsored the Builder & Brews meetup, AI Dev 26 x SF (DeepLearning.ai), and the AI Agent Conference. Follow us on LinkedIn and Luma to stay updated on our upcoming events.

Until next time

The Oumi Platform continues to grow stronger, with more users, more features, and more business problems solved in record time! We look forward to your feedback. If you’d like to get in touch, drop us a line via our website.

Future newsletters will cover, in addition to applications, case studies, and platform updates, a discussion of recent news and research related to SLMs. Subscribe now to stay in the loop!

Until then, Happy Building and May the Vibes be With You! ✨