Blog

Insights on custom AI models, open-source ML, and the future of specialized intelligence.

Case Study: Aurasell builds an 8B model that outperforms Sonnet 4.5 by 8% at extracting information from websites

With Oumi’s technology, Aurasell's custom AI beat Anthropic's Sonnet 4.5 at extracting information from webpages by 8% in coverage and 12% in groundedness

Read Post

Case Study: Ada built a real-time guardrail for their agents with 50% fewer false positives

With Oumi's technology, Ada's custom guardrail model beat GPT-4.1 Mini by 4% in accuracy while slashing latency and the false-positive rate

Read Post

Case Study: DMG achieves 6% higher quality and 100x lower costs for invoice validation

With Oumi’s technology, DMG’s custom AI beat GPT-5.2 by 6% in accuracy and 6% in validity at 100x lower cost

Read Post

Building Customer Support with a sub-1B Small Language Model that Beats GPT-5.4 (Part 1)

Small Language Models occupy a sweet spot in speed and cost between frontier LLMs and BERT models for a wide range of NLP tasks

Read Post

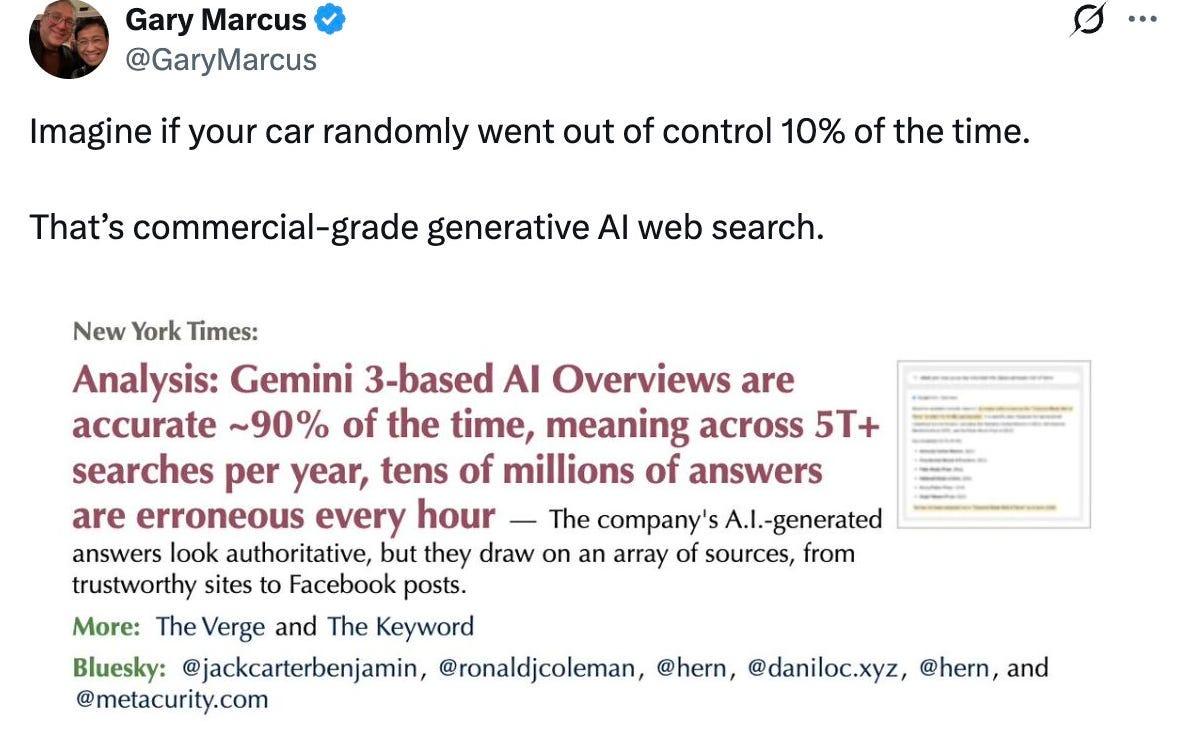

Oumi’s Study Finds 50% of AI Overviews Untrustworthy

A not-so-simple study of SimpleQA and AI search results

Read Post

The Era of General Purpose AI Is Over

General-purpose models were built for the average.

Read Post

The Case for Specialized Intelligence

Our vision for AI at Oumi.ai

Read Post

Lambda and Oumi partner for end-to-end custom model development

Enterprises can now build and deploy custom models for their specific use cases 100x faster, with 10x better cost efficiency, and superior accuracy

Read Post

Wrapping Up 2025, Looking Ahead to 2026

That’s a wrap on 2025. Hello 2026. 🎉

Read Post

Oumi v0.5.0: Data Synthesis, OpenEnv, Hyper-param Tuning

Major new features!

Read Post

DCVLR Competition Results: Data Curation for Vision-Language Reasoning

Read Post

Less (Data) is More for Fine-tuning

1000 Samples or Less for Amazing Fine-tuning

Read Post

Why Less is More for Fine-Tuning

What is the evidence for successful fine-tuning with small data?

Watch on Substack

Small Fine-tuned Models are All You Need

But the devil is in the details—how can you get them right?

Read Post

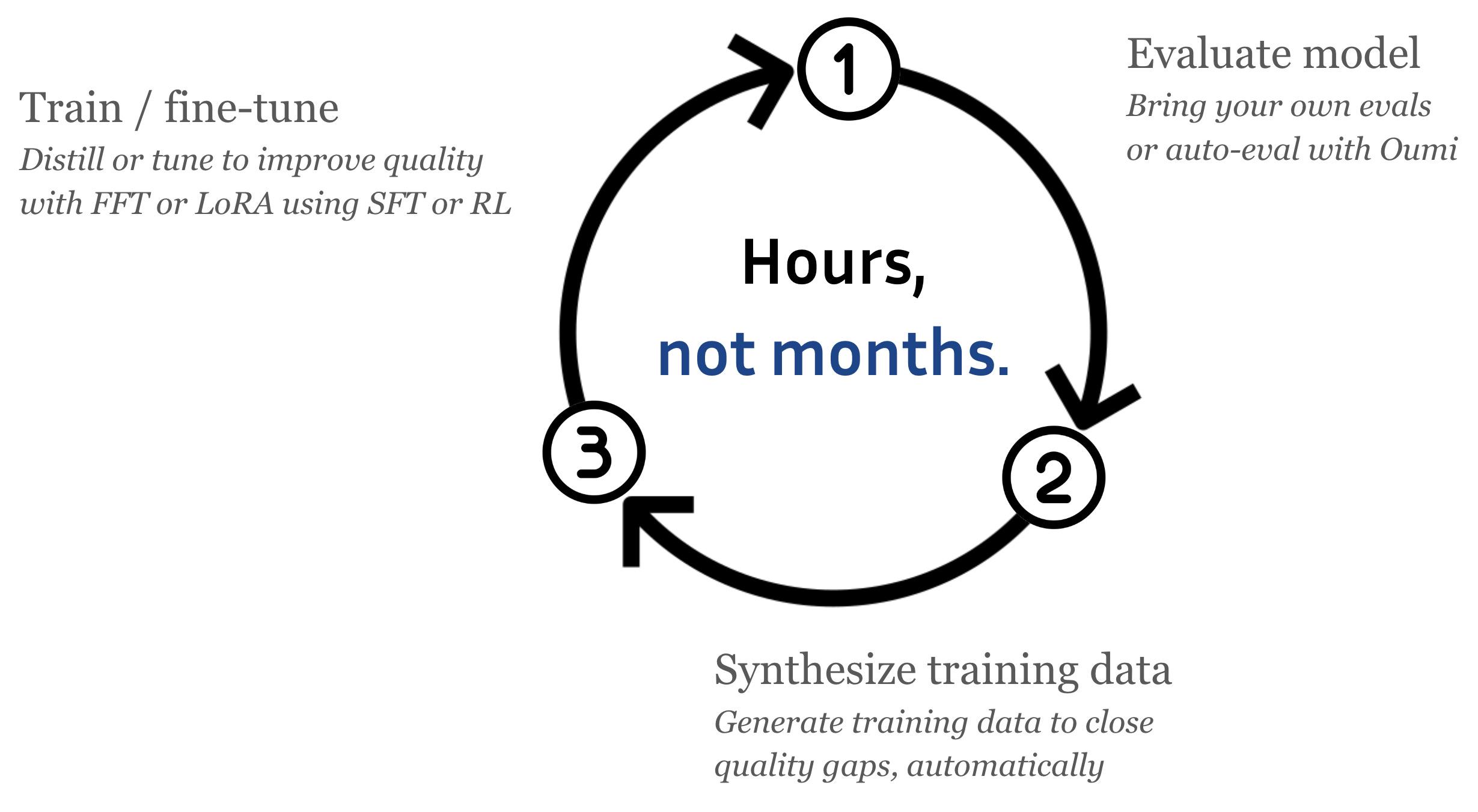

Hours, Not Months – The Custom AI Era is Now

Read Post

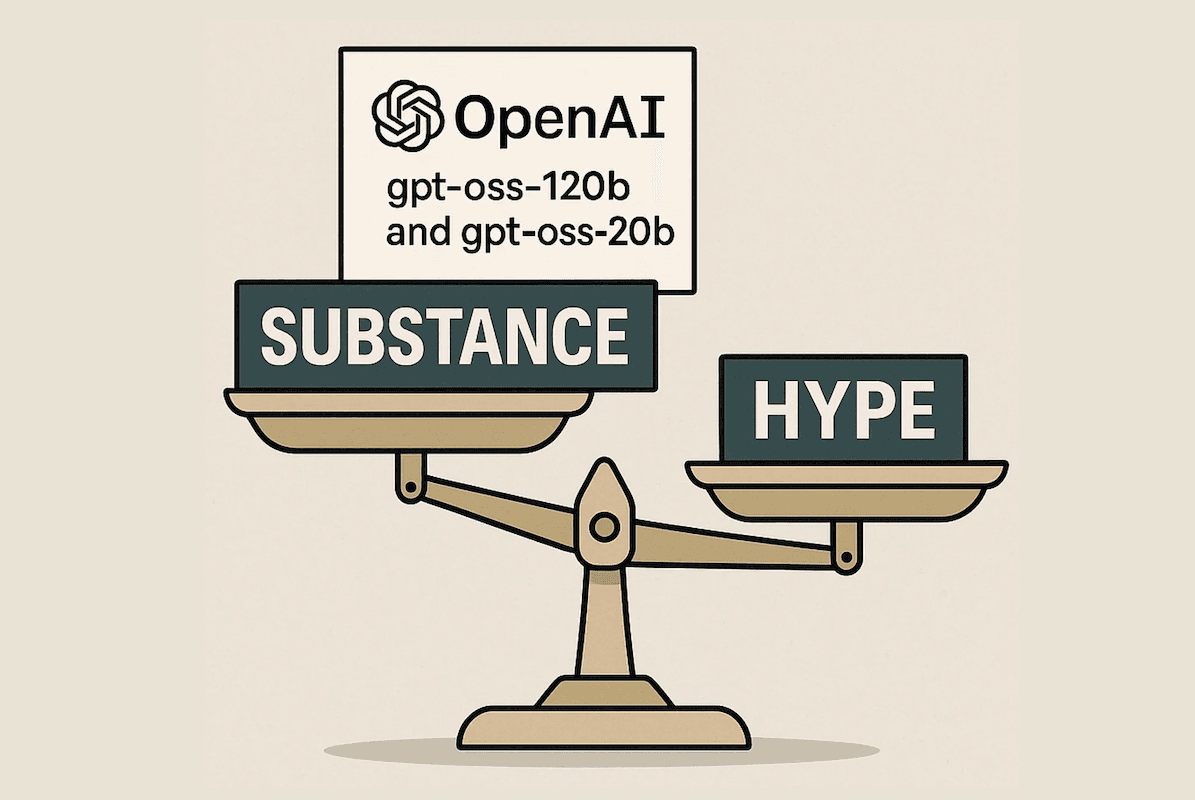

OpenAI Just Dropped Two Massive Open-weight Models — But How Do We Separate The Reality From The Hype?

Evaluating GPT-OSS-20B and GPT-OSS-120B with LLM-as-a-Judge — strong on truthfulness, but overly conservative refusals hold them back

Read Post

Training Frontier Reasoning VLMs for the 2025 NeurIPS DCVLR Workshop with Oumi

Baseline data curation strategies for the NeurIPS DCVLR competition — synthesis, filtration, and a 37.6% improvement on reasoning benchmarks

Read Post

Compete to Curate Smarter Vision-Language Data—And Win Big at NeurIPS 2025

The NeurIPS 2025 DCVLR competition challenges teams to curate compact, high-impact datasets that improve visual reasoning in small models

Read Post

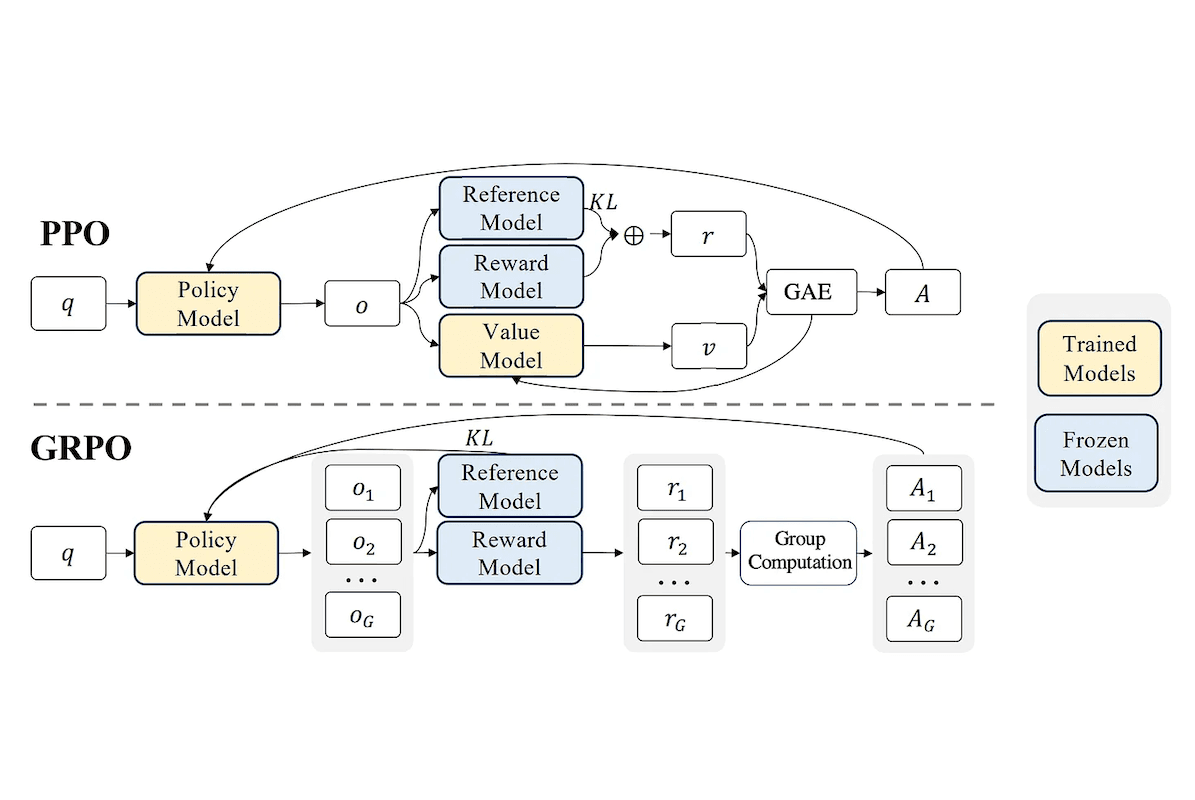

Run GRPO Training in Oumi Using the trl and verl Libraries

How to run Group Relative Policy Optimization in Oumi using trl and verl — simpler, cheaper RL training without a value model

Read Post

Why I Joined Oumi: A Personal Journey Toward Truly Open AI

Most "open" AI models keep training data and code locked away — Stefan Webb on why truly open foundation models matter

Read Post

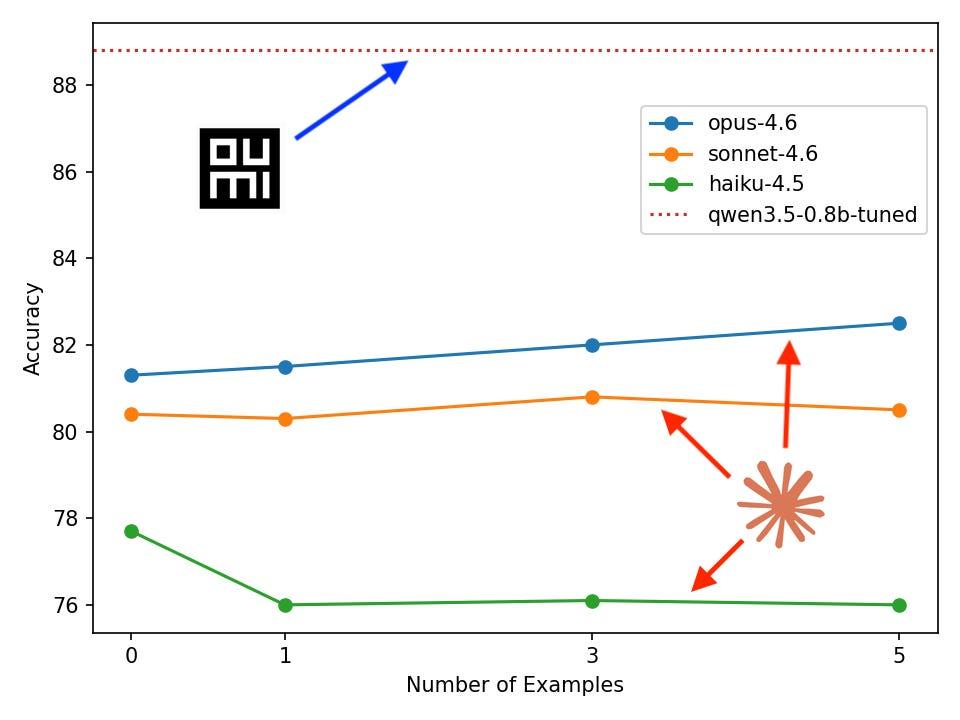

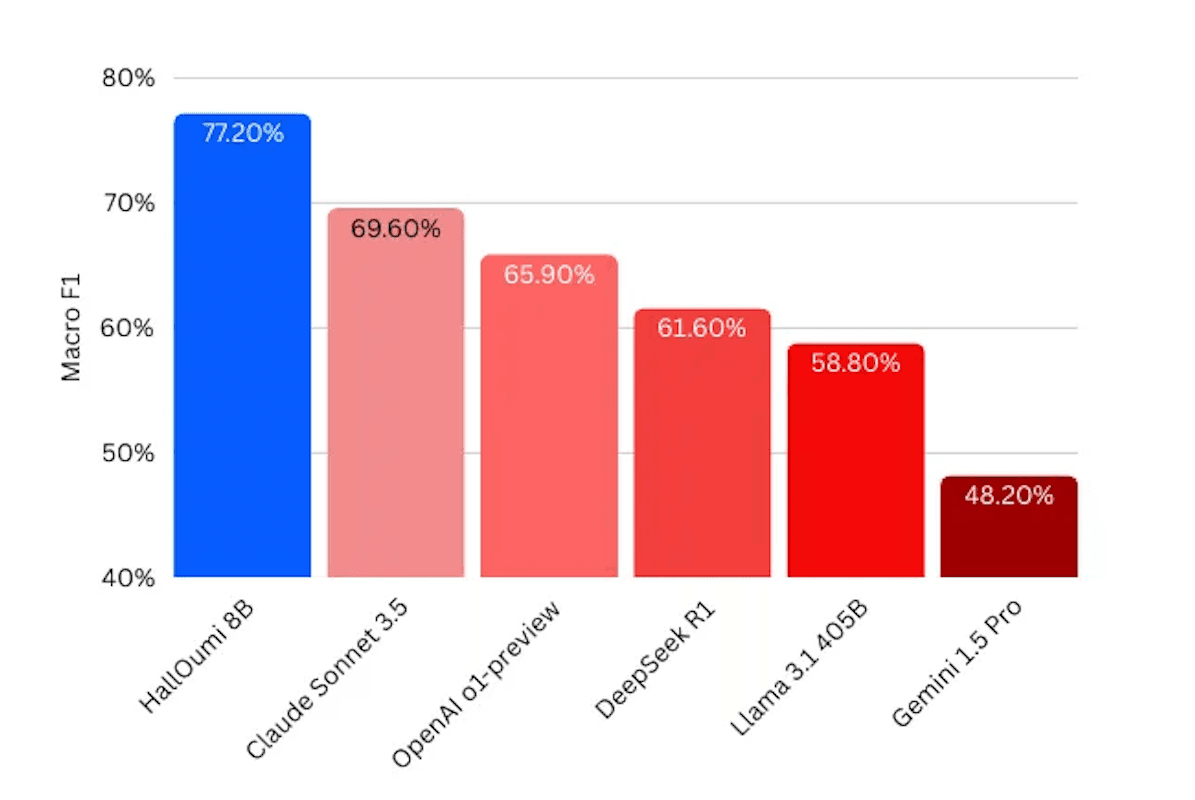

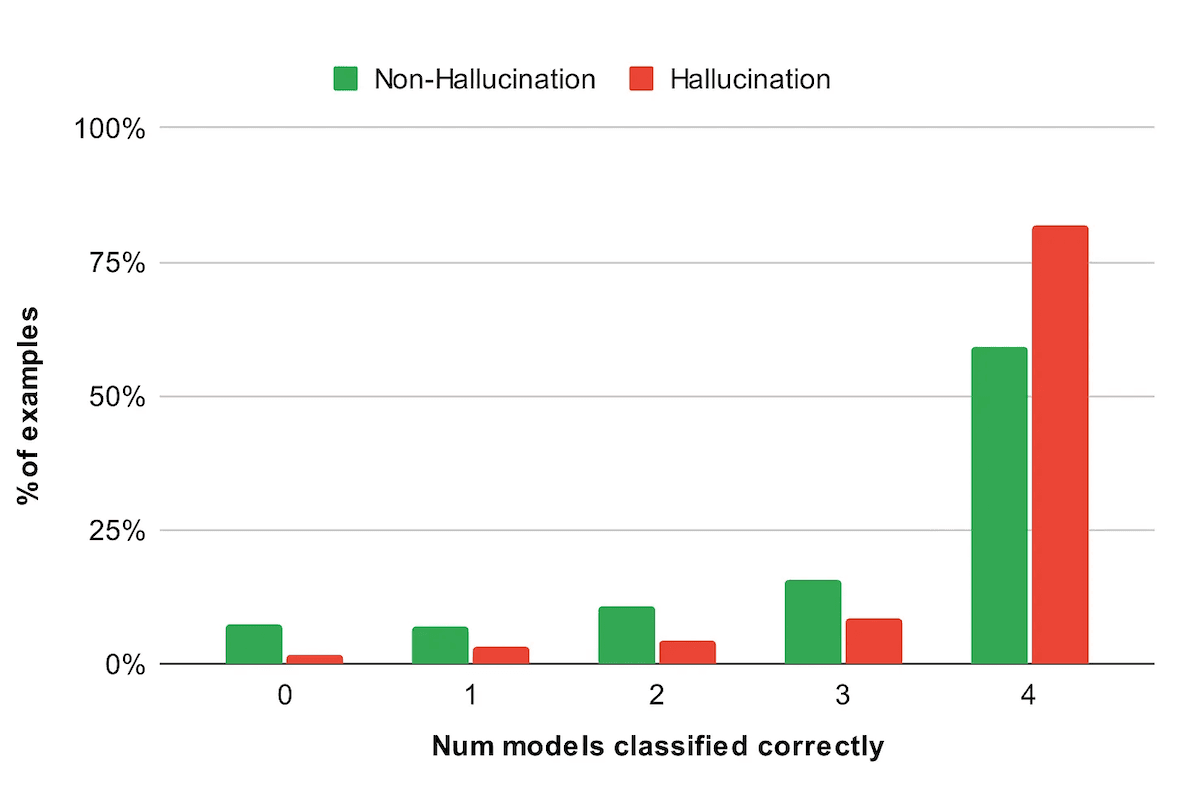

Introducing HallOumi: A State-of-the-Art Claim-Verification Model

An open-source 8B hallucination detection model that outperforms GPT-4 and Claude 3.5 with per-sentence verification and citations

Read Post

Build Custom Evaluations for any Open or Closed Model in just 50 Lines of Code

Build custom LLM evaluations in 50 lines of code — comparing GPT-4o, Claude 3.7, LLaMA 405B, and Gemini as hallucination classifiers

Read Post

If AI Isn't Truly Open, It Will Fail Us: How The Little Fish Can Take On The Sharks

Why closed AI controlled by a handful of corporations will fail us — and how open-source collaboration can build something better

Read Post